From grey goo to grandma's napalm: the ethics of AI and what you can't do with it

For the last 10 years AI has been listed as one of the top ten things likely to end the world. It's also been listed as the answer to some of the hardest problems out there, from protein folding to nuclear fusion.

But, like most things, its previous iterations have mostly been used to serve us more targeted adverts, and occasionally to make cars drive on their own. Things changed with the advent of the transformer and OpenAI breaking onto the scene. Or did they?

It’s been 50 years since the AI winter and 10 since the deep learning boom, and their promised paradigm shifts have mostly been confined to academic circles. What hasn’t changed are the ethical concerns behind these tools; what has changed is the access.

Together, let’s explore where we’ve come from and what lessons have been learned along the way. Make no mistake: AI is the most dangerous technology I’ve worked with, but with the right thinking we can be ethical.

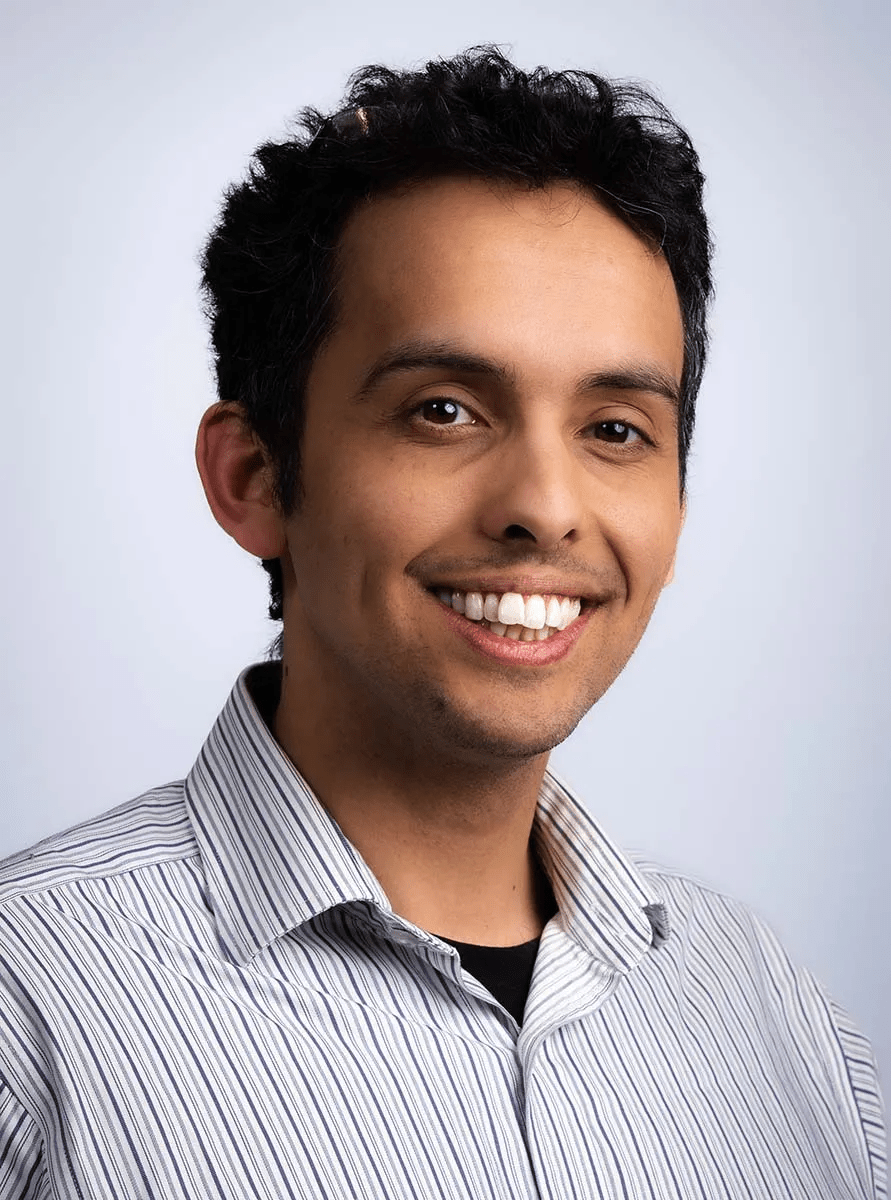

Speaker

Ben Gamble

Ben has spent over 10 years leading engineering in startups and high-growth companies. As a founder, a CTO, a producer and a product leader, he’s bridged the gap between research and product ...